BetBoom: Lia resolves 59% of requests without an agent

80%

Of requests automated

59%

Closed by the bot

BetBoom is a licensed bookmaker operating in the Russian market since 2010. The brand runs over 400 clubs across Russia and 3 head offices in Moscow. Support agents handle an average of 132,000 unique users a month.

About the project

BetBoom came to us in 2024 with two pain points: long customer wait times and an inefficient process for repetitive requests, where agents answered every question by hand. After Lia's deployment, bot coverage reached 87% of requests and 59% are closed without an agent, which eased the team's load and reduced burnout.

Starting point

BetBoom handled a massive volume of requests, and the load on agents was crushing: before the AI bot, they could not keep up with incoming messages. The two main pain points were long customer wait times and an inefficient process for handling repetitive questions.

Lia's brief: reduce load and message response time. The desired outcome was higher service quality and customer loyalty.

As the customer base grew, agents fell behind — they couldn't process messages while solving complex cases at the same time. Wait times rose.

More customers meant more requests per agent. Agents burned out on chat volume, and both their engagement and their motivation to actually resolve customers' issues fell.

Goals

Reduce agent load.

Cut response time on customer messages.

Optimize the frequent-question handling system through automation.

Solution

Pulled BetBoom's customer-request corpus from the past several months.

Analyzed the requests and surfaced the highest-frequency ones. Repetitive answers on those drove burnout — that's where automation had to land first.

For every unique intent, authored Lia's response scenarios in the brand's tone of voice, anchored on the agents' scripts.

Trained Lia to identify the customer's primary intent — even when the message had errors, typos, or stuffed multiple requests into a single long block of text.

Built a scenario where Lia transfers to a human when she doesn't know the answer, or when the customer explicitly asks to chat with an agent.

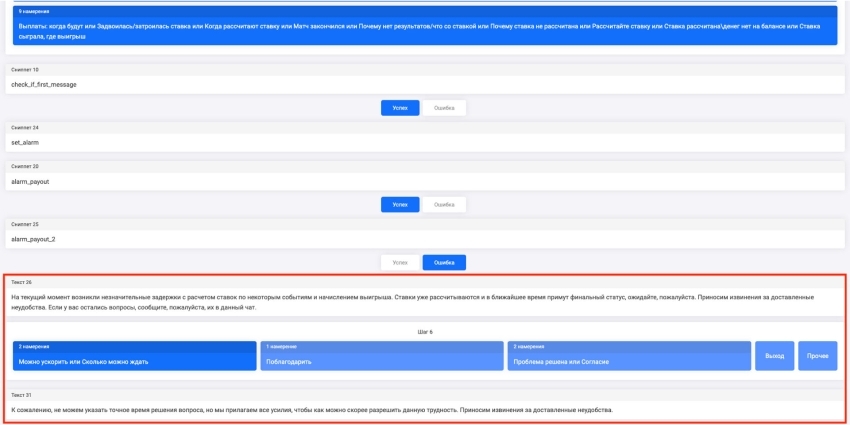

Deployed an alert mechanic for selected topics. How it works: when the service is overloaded by request volume, or BetBoom's team runs scheduled updates, agents know in advance which topics will spike. In those moments, bot behavior tuned for peak load is activated. For example, when servers have issues, the bot follows its greeting with an additional message: "Sorry, we're doing technical work, please come back later." That mechanic absorbs a huge volume of requests and handoffs.

Scripted response with the alert disabled

Scripted response with the alert active (one variant)
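The alert mechanic described above can be sketched in a few lines. This is only an illustration under stated assumptions: the `AlertBoard` class, the topic key, and the greeting text are hypothetical names, not part of Lia's actual configuration, which the case study does not describe.

```python
from dataclasses import dataclass, field

GREETING = "Hello! I'm Lia. How can I help?"  # hypothetical greeting text


@dataclass
class AlertBoard:
    """Holds alerts switched on for topics that are expected to spike."""
    active: dict[str, str] = field(default_factory=dict)  # topic -> extra message

    def enable(self, topic: str, message: str) -> None:
        self.active[topic] = message

    def disable(self, topic: str) -> None:
        # Removing a topic that is not active is a no-op.
        self.active.pop(topic, None)

    def greeting(self) -> list[str]:
        """Return the greeting, followed by one extra message per active alert."""
        return [GREETING, *self.active.values()]


board = AlertBoard()
board.enable("technical_work", "Sorry, we're doing technical work, please come back later.")
for line in board.greeting():
    print(line)
```

With the alert disabled, `greeting()` returns only the standard greeting, so the same scenario serves both states.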

Added instant FAQ answers: the most relevant topics surface as buttons at the start of the chat.

Combined a button-driven flow with free-text entry. Users could either pick a frequent question from the bot's button list and get an instant answer, or type their question in free text if no button matched.

Request and process analysis

To train the AI, we ingested several thousand phrasings and conversation scripts from BetBoom agent–customer interactions.

Omnidesk integration

Lia was connected to BetBoom's site chat and the Telegram support chat. After several months of operation, coverage (sessions without an agent + sessions with scripted handoff to an agent) reached 87%.
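The coverage definition above is a simple ratio, and a short sketch makes the arithmetic explicit. The function name and the session counts are assumptions for illustration; the counts are made up and are not BetBoom's data.

```python
def coverage(no_agent: int, scripted_handoff: int, total: int) -> float:
    """Share of sessions closed without an agent or handed off by a script."""
    if total <= 0:
        raise ValueError("total must be positive")
    if no_agent < 0 or scripted_handoff < 0 or no_agent + scripted_handoff > total:
        raise ValueError("session counts are inconsistent")
    return (no_agent + scripted_handoff) / total


# Made-up counts for illustration only, not BetBoom's data.
print(f"{coverage(no_agent=600, scripted_handoff=250, total=1000):.0%}")  # prints 85%
```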

Building the project on top topics

The Lia-for-BetBoom project ran in stages:

1. Identified the highest-frequency request topics.

Our team partnered closely with BetBoom's customer-care team. First we pulled the customer-request corpus for the past several months. Analysts studied the requests and surfaced the most frequent ones: those were the requests the bot had to automate. 53 core user requests were defined:

Top up balance.

Change phone number.

Cashback credit.

Sign-up freebet.

Remove a card.

And so on.

2. Studied agent message-handling scripts.

To make Lia handle requests the way an agent would, analysts had to bake that logic into her response scenarios. To do that, our specialists studied the scripts BetBoom agents work from.

3. Implemented those scenarios in Lia's logic.

Our team built the question-and-answer logic and wrote the bot copy from a structured spec. The brand tone of voice was preserved, making the conversation with Lia as close to talking to an agent as possible.

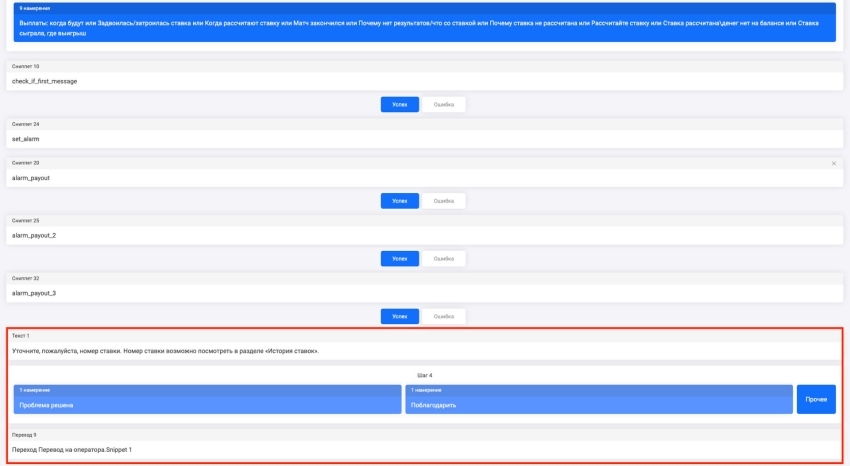

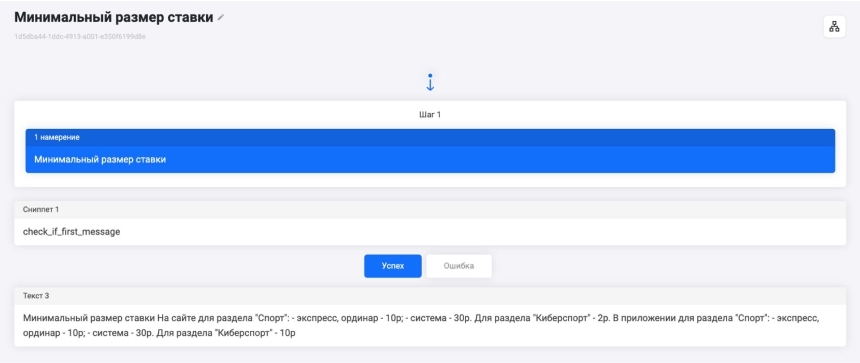

For example, customers often ask about bets. An agent in that case would ask which specific question they have and walk through the resolution options. Lia asks the same questions and branches on the answers the customer chooses.

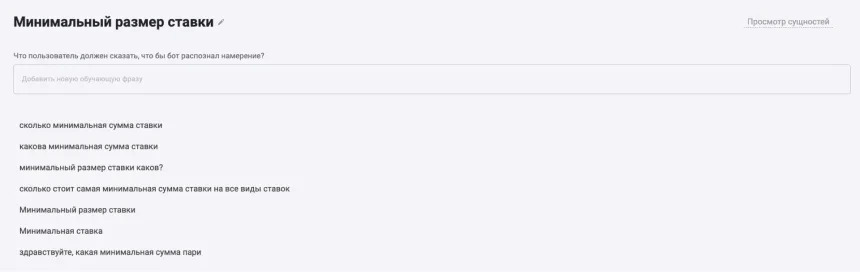

Lia's scenario answering a question about minimum bet size

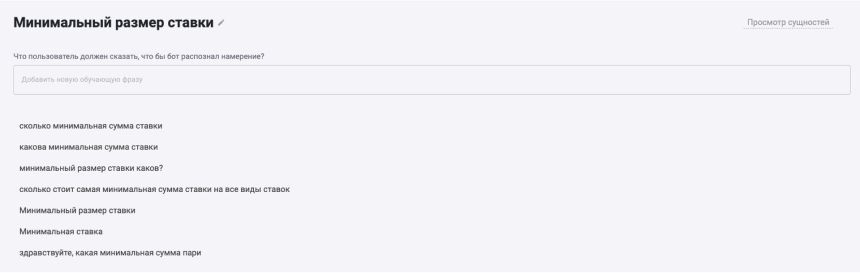

Analysts split every request into topics, each containing 50 or even 100 phrasings of the same question — including errors and typos.

4. Reflected agent scripts in Lia's logic.

Our analysts studied the scripts BetBoom agents worked from and reflected them in the bot's customer responses.

Lia's text became as close to the agent voice as possible — increasing customer trust in support.

Example of an intent containing different phrasings of the same question
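To illustrate how one intent can hold many phrasings and still match a message with typos, here is a minimal sketch. It assumes plain string similarity from Python's standard library (`difflib.SequenceMatcher`), not Lia's actual model, which the case study does not describe. The intent names, phrasings, and threshold are hypothetical.

```python
from difflib import SequenceMatcher

# Hypothetical intents with a few sample phrasings each
# (a real topic would hold 50 to 100 of them).
INTENTS = {
    "top_up_balance": ["how do i top up my balance", "deposit money to account", "add funds"],
    "change_phone_number": ["change my phone number", "update phone number", "new phone number"],
    "remove_card": ["remove my card", "delete bank card", "unlink card from account"],
}


def normalize(text: str) -> str:
    """Lowercase, replace punctuation with spaces, and collapse whitespace."""
    cleaned = "".join(ch if ch.isalnum() or ch.isspace() else " " for ch in text.lower())
    return " ".join(cleaned.split())


def match_intent(message: str, threshold: float = 0.6):
    """Return (intent, score) for the closest phrasing, or (None, score) below the threshold."""
    text = normalize(message)
    best_intent, best_score = None, 0.0
    for intent, phrasings in INTENTS.items():
        for phrasing in phrasings:
            score = SequenceMatcher(None, text, phrasing).ratio()
            if score > best_score:
                best_intent, best_score = intent, score
    if best_score < threshold:
        return None, best_score
    return best_intent, best_score


intent, score = match_intent("How do I topup my balanse?")
print(intent)  # top_up_balance
```

A message that resembles no stored phrasing falls below the threshold and returns `None`, which is the point where a bot would hand the conversation to a person.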

Customer request flow

At level 1, the user can tap a button for one of the most frequent questions and get an instant answer.

If that's not enough, at level 2 they can message Lia directly with their question. All requests are handled automatically. BetBoom specialists can review every bot-handled request.

At level 3, if Lia cannot answer or the customer wants to talk to a human, the conversation is routed to the right BetBoom specialist.

The customer can always choose to chat with a live agent — they're routed to the right specialist after a few clarifying questions.

The agent immediately sees the full conversation history with the bot. No need to ask clarifying questions — they go straight to the issue and answer.
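The three levels above can be read as a small routing function. The sketch below is an assumption about how such a flow might be wired, not Lia's implementation: the button labels, placeholder answers, keyword list, and the `answer_free_text` callback are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical button labels with placeholder answers (level 1).
BUTTON_ANSWERS = {
    "Top up balance": "(scripted answer about topping up the balance)",
    "Remove a card": "(scripted answer about removing a card)",
}

# Hypothetical words that signal an explicit request for a person.
HUMAN_REQUESTS = ("agent", "operator", "human")


@dataclass
class Reply:
    level: int  # 1 = button, 2 = bot answer to free text, 3 = handoff to an agent
    text: str


def route(message: str, answer_free_text: Callable[[str], Optional[str]]) -> Reply:
    """Route one customer message through the three levels."""
    if message in BUTTON_ANSWERS:  # level 1: a frequent-question button was tapped
        return Reply(1, BUTTON_ANSWERS[message])
    wants_human = any(word in message.lower() for word in HUMAN_REQUESTS)
    answer = None if wants_human else answer_free_text(message)
    if answer is not None:  # level 2: the bot answers the free-text question
        return Reply(2, answer)
    # Level 3: the bot has no answer, or the customer asked for a person.
    return Reply(3, "Connecting you to a specialist.")


def demo_bot(message: str) -> Optional[str]:
    """Stand-in for the intent model: it knows a single topic."""
    if "minimum bet" in message.lower():
        return "(scripted answer about the minimum bet)"
    return None


print(route("Top up balance", demo_bot).level)              # 1
print(route("What is the minimum bet?", demo_bot).level)    # 2
print(route("I want to talk to an agent", demo_bot).level)  # 3
print(route("My stream is frozen", demo_bot).level)         # 3
```

Keeping the explicit request for a person ahead of the bot's own answer mirrors the rule that the customer can always choose a live agent.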

Fine-tuning existing topics and labeling new ones

The BetBoom team continues to track messages where Lia couldn't answer on her own and/or escalated to an agent.

Analysts independently author response scenarios for questions that can still be automated, reducing Lia's handoffs to the betting service's staff. That cuts agent load and lifts engagement, since agents handle the genuinely complex and non-standard issues rather than the templated ones.

After Lia went live, BetBoom's leadership measured the unit economics and found that handling one complex request now costs at least 3× less than before. Lia's answers to simple, frequently asked questions are also far cheaper than having agents handle them.

Results of Lia's deployment

The project to integrate Lia into BetBoom's bots was delivered turnkey by our specialists.

From the customer we needed only the request corpus from the past few months — that's what we analyzed to surface user questions and split them into scenarios.

Everything else was on us — from development to deployment. BetBoom specialists didn't touch configuration or technical issues — they got a working communication tool out of the box.

With Lia, BetBoom handles more customer requests without scaling support headcount. The AI answers questions several times faster than an agent and handles several requests in parallel, which is not always possible for a human. Agents no longer burn out and are pulled into conversations only for non-standard cases.

If you don't want to fall behind market leaders, switch to AI-powered chat support now. A typical Lia implementation for mid-market and SMB companies takes about 3 days, and the investment pays for itself within the first 2–3 months.

For a free consultation with our team, leave a request in the form below. Our manager will follow up on a video call at your convenience and:

identify bottlenecks in your sales operation;

walk through which processes Lia can optimize;

break down how Lia helps you grow margin;

explain pricing tiers and implementation options for your business.