Localrent: 5× faster customer response time

5× faster issue resolution

80% savings per request

68% savings on support payroll

Localrent is an aggregator of car-rental companies operating in 17 countries outside Russia. Operating since 2011, it serves more than 30,000 customers a year worldwide. Each month, its agents handle requests from 5,000 unique users.

About the project

The car-rental aggregator came to us in late 2021. Lia's task was to reduce agent workload and prevent burnout from repetitive questions. The result: 100% of first responses to customers are now automated, and agents handle non-standard cases instead of routine ones, responding 5× faster.

Starting point

Before working with us, Localrent routed every message to a human agent, which caused response delays and burnout.

Customers were unhappy with the average first-response time, since most car-rental issues need an immediate reaction from support.

Support staff burned out answering repetitive questions: engagement fell and turnover rose.

At night, customers got no replies because agents worked 09:00–23:00 Moscow time. Car-rental customers book around the clock, so the lack of overnight replies dragged service ratings down.

Goals

Prevent agent burnout from repetitive questions.

Cut first-response time to under 1 minute.

Speed up support during overnight hours.

Solution

Analyzed Localrent's customer-request corpus.

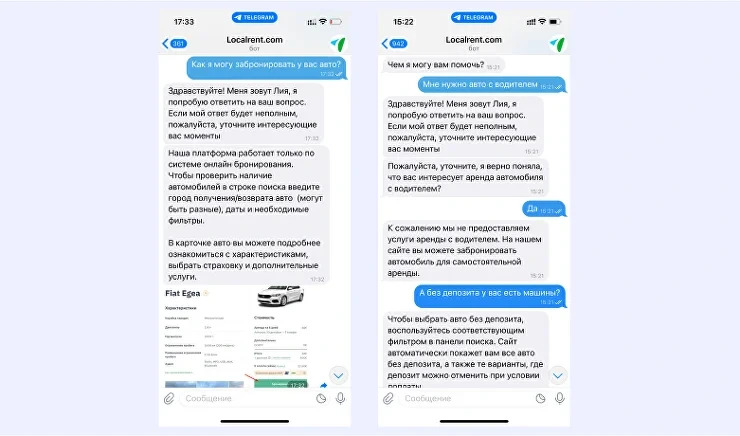

Split every request into topics (intents). A single topic could cover 50+ phrasings of the same customer question, including variants with misspellings and typos.

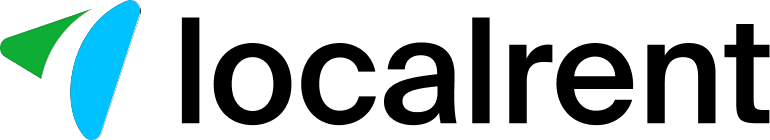

Trained Lia to handle requests the way an agent would, in Localrent's tone of voice. Some customers didn't immediately realize the virtual assistant was an AI rather than a person.

Integrated Lia with Omnidesk, Localrent's internal tool for customer communication across channels: Telegram, WhatsApp, Viber, and the website's live chat.

Automated 100% of support's first responses. The change gave agents time to think through individual customer issues.

Built an algorithm in which Lia, before transferring a conversation to a human, collects all the necessary information and hands the agent a ready-made case summary, including order numbers.

Built dynamic scenarios in which Lia herself advises customers on booking specifics for particular routes.

Deployed an entity filter that minimizes agent handoffs by recognizing intents within messages. Even when a long request can't be parsed in full, Lia answers it instead of escalating if it contains a fragment that analysts have configured an AI filter for.

Provide ongoing fine-tuning, regularly expanding the range of topics and scenarios Lia handles without transferring to an agent.
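The handoff step in the list above (Lia collects the necessary details, then gives the agent a ready case summary) can be illustrated as simple slot filling. Below is a minimal Python sketch, assuming hypothetical required fields, prompts, and output format; the case study does not describe which fields Lia actually collects or how she stores them.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# Hypothetical details an agent needs before taking over a conversation.
REQUIRED_FIELDS: List[str] = ["order_number", "issue_summary"]

# Hypothetical prompts the bot uses to ask for each missing detail.
PROMPTS: Dict[str, str] = {
    "order_number": "Could you share your booking number?",
    "issue_summary": "Please describe the problem in a few words.",
}


@dataclass
class HandoffBundle:
    """A ready-made case summary passed to a human agent (hypothetical type)."""
    fields: Dict[str, str] = field(default_factory=dict)

    def missing(self) -> List[str]:
        # Fields still empty, in the order the bot should ask for them.
        return [name for name in REQUIRED_FIELDS if not self.fields.get(name)]


def next_step(bundle: HandoffBundle) -> Optional[str]:
    """Return the next question to ask, or None once the bundle is complete."""
    missing = bundle.missing()
    return PROMPTS[missing[0]] if missing else None


def hand_off(bundle: HandoffBundle) -> str:
    """Format a completed bundle for the agent's queue (hypothetical format)."""
    if bundle.missing():
        raise ValueError(f"bundle incomplete: {bundle.missing()}")
    return "; ".join(f"{name}={bundle.fields[name]}" for name in REQUIRED_FIELDS)


if __name__ == "__main__":
    bundle = HandoffBundle()
    print(next_step(bundle))  # Could you share your booking number?
    bundle.fields["order_number"] = "12345"
    bundle.fields["issue_summary"] = "car not at pickup point"
    print(hand_off(bundle))   # order_number=12345; issue_summary=car not at pickup point
```

The bot keeps asking until `next_step` returns `None`, so the agent never receives a conversation with the key details still missing.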

Request and process analysis

To train the AI, we ingested hundreds of phrasings and conversations from the entire history of Localrent's agent–customer interactions.

Lia's response scenarios in the support chat

Omnidesk integration

Localrent already worked with the Omnidesk chat platform — agents received every inbound request through it. Our team connected Lia to Omnidesk and integrated the AI across messengers.

After several months of operation, coverage (sessions without an agent + sessions with scripted handoff to an agent) reached 80%.
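Written out, the coverage figure is a share of sessions. The denominator, taken here as all sessions in the period, is an assumption, since the text does not state it explicitly:

```latex
\text{coverage} = \frac{N_{\text{no agent}} + N_{\text{scripted handoff}}}{N_{\text{total}}} \times 100\%
```

Here $N_{\text{no agent}}$ counts sessions Lia closed on her own, and $N_{\text{scripted handoff}}$ counts sessions passed to an agent through a scripted handoff.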

Building the project around top topics

Our analysts built Localrent's Lia bot in stages:

Identified the most frequent customer questions

Specialists analyzed the original request corpus and surfaced the most frequent queries. Among these, analysts isolated the routine, repetitive questions that agents, already close to burnout, were tired of answering.

Similar phrasings were grouped into a single intent (topic), and routing was configured against them.

Contents of the "Office address" intent

Analysts split every request into topics, each with 50 or even 100 variations of the same question — including errors and typos.
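Lia's recognition model isn't described in this case study, but the idea that many misspelled phrasings map to one intent can be illustrated with plain string similarity. Below is a minimal sketch using Python's standard `difflib.SequenceMatcher`; the intent names, phrasings, and the 0.75 threshold are hypothetical, and a production system would use a trained classifier rather than this fuzzy matcher.

```python
from difflib import SequenceMatcher
from typing import Dict, List, Optional, Tuple

# Hypothetical intents, each holding a few phrasings (real intents hold 50-100+).
INTENTS: Dict[str, List[str]] = {
    "office_address": [
        "where is your office",
        "what is the office address",
        "office address please",
    ],
    "deposit_refund": [
        "when will i get my deposit back",
        "deposit refund",
        "how long does the deposit refund take",
    ],
}


def normalize(text: str) -> str:
    # Lowercase, replace punctuation with spaces, and collapse whitespace.
    kept = [c if c.isalnum() or c.isspace() else " " for c in text.lower()]
    return " ".join("".join(kept).split())


def classify(message: str, threshold: float = 0.75) -> Optional[Tuple[str, float]]:
    """Return (intent, score) for the closest phrasing, or None to escalate."""
    text = normalize(message)
    best: Optional[Tuple[str, float]] = None
    for intent, phrasings in INTENTS.items():
        for phrase in phrasings:
            score = SequenceMatcher(None, text, phrase).ratio()
            if best is None or score > best[1]:
                best = (intent, score)
    # Below the threshold the bot does not guess; the request goes to an agent.
    return best if best is not None and best[1] >= threshold else None
```

A typo'd message such as `"Wher is you office?"` still lands on `office_address`, while an unrelated question falls below the threshold and is escalated. Adding more phrasings per intent raises the chance that some stored variant is close to what the customer typed.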

Implemented agent scripts in Lia's logic

Our analysts studied the scripts Localrent agents worked from and reflected them in the bot's customer responses.

Lia's replies came as close as possible to a Localrent agent's voice, which builds customer trust in support.

Over a project's lifetime, each intent grows to 100+ phrasings, which raises recognition accuracy.

Fine-tuning existing topics and labeling new ones

Localrent switched to handling customer requests through Lia in December 2021. Since then, our analysts have continued to study the messages Lia couldn't answer or escalated to an agent.

More than 87 topics are now automated, and the client's team regularly updates them.

After Lia went live, Localrent's leadership measured unit economics and found that customer LTV had grown. Customers convert faster when they get quick AI replies. In one example, a customer contacted support three times, and each interaction ended with a car booking.

Lia saves Localrent's customer-care time and accelerates customer interactions — freeing agents to take on more complex tasks.

Building dynamic scenarios

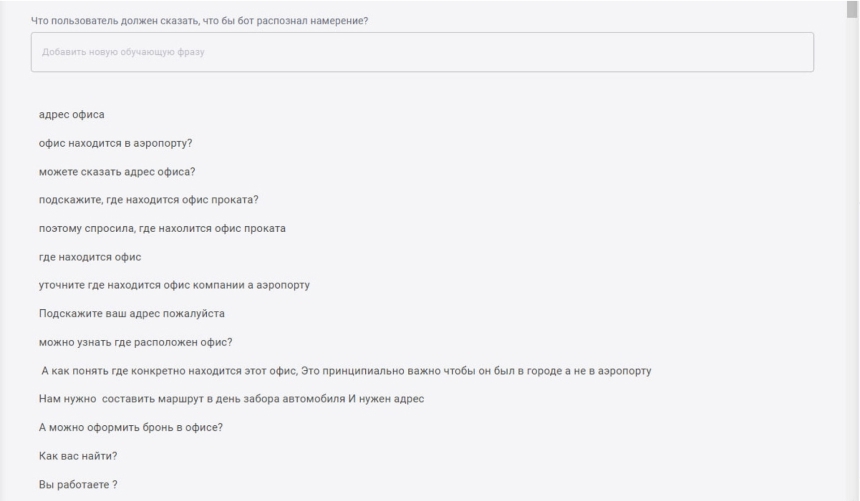

Dynamic behavior is implemented through complex scenarios with embedded code and dynamic variables (entities).

For example, dynamic scenarios are triggered when customers ask about taking a rental car across a border. Here Lia works with context and entities: she identifies the "from" and "to" points of the trip, maps them to intents, and advises on the request.

Mapping means that Lia has pre-written answer variants for every route available at Localrent ("from–to"). The dynamic part is that Lia chooses which template to use based on the route the customer names.
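The route mapping described above can be sketched as a lookup table keyed by the (from, to) entities. Below is a minimal Python sketch; the country names, template texts, and the rule "the first country mentioned is the origin" are hypothetical simplifications, and Lia's actual scenario engine and entity extraction are not described in this case study.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical countries the bot recognizes (lowercase for matching).
KNOWN_COUNTRIES: List[str] = ["country a", "country b", "country c"]

# Hypothetical pre-written answers keyed by (origin, destination).
ROUTE_TEMPLATES: Dict[Tuple[str, str], str] = {
    ("country a", "country b"): "Driving from {origin} to {destination}: rules for the A-to-B crossing go here.",
    ("country b", "country a"): "Driving from {origin} to {destination}: rules for the B-to-A crossing go here.",
}

# Routes without a template are escalated rather than guessed.
FALLBACK = "Let me connect you with an agent to check this route."


def extract_route(message: str) -> Optional[Tuple[str, str]]:
    """Naive entity extraction: the first known country mentioned is the origin,
    the second is the destination."""
    text = message.lower()
    found = sorted((text.find(name), name) for name in KNOWN_COUNTRIES if name in text)
    if len(found) < 2:
        return None
    return found[0][1], found[1][1]


def answer_route(message: str) -> str:
    """Pick the pre-written template that matches the customer's route."""
    route = extract_route(message)
    if route is None or route not in ROUTE_TEMPLATES:
        return FALLBACK
    origin, destination = route
    return ROUTE_TEMPLATES[route].format(origin=origin.title(), destination=destination.title())
```

Because the templates are keyed by direction, "from B to A" and "from A to B" get different answers, and an unmapped pair goes to an agent instead of receiving a wrong template.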

Results of Lia's deployment

Our team delivered the Lia implementation turnkey. All we needed from the client was the request corpus; everything else, from development through deployment and ongoing tuning, was on us. Localrent's specialists didn't deal with configuration or technical issues: they got working customer-communication tools out of the box.

We also track how Lia's dialogues with customers convert into resolved issues. Our analysts regularly audit the customer journey and suggest improvements through a dedicated project manager.

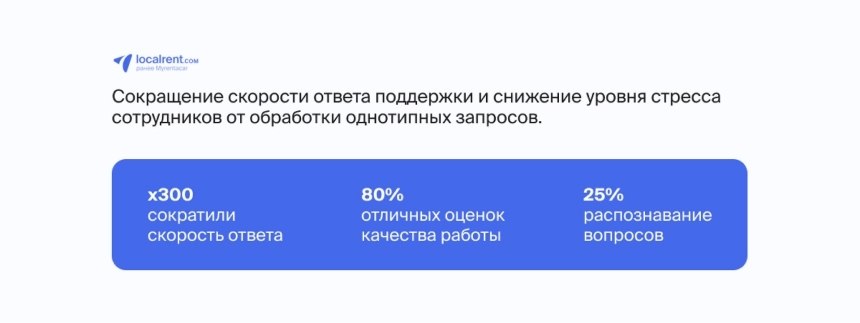

With Lia in place, response speed increased 5×. The AI replies in 0.5 seconds, faster than any human agent can.

Now that Lia handles 100% of first responses, agents have time to think through their replies without rushing. That improves resolution quality and gets customers to outcomes faster.

Beyond reducing agent workload, Lia improved the quality of consultations. After a chat, customers can rate the bot. In 2024, 80% of customers rated their chats with Lia "excellent," and 45 of 51 customers were satisfied with support, which moves more requests toward a sale.

Localrent's unit-economics impact after Lia went live

Roadmap

"Our project is in continuous evolution, and the Lia team helps us keep it that way — together we always find interesting solutions and new growth points. It's incredibly energizing and motivating."

— Daria Churakova, Deputy Head of Support, Localrent

Adding more controls, such as intent prioritization. This matters when a customer sends two questions with different intents in one message: Lia answers the higher-priority one first.

Refining Lia's bot integration and adding images to scenarios.
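Intent prioritization from the roadmap can be sketched as sorting the intents detected in one message by a priority table. Below is a minimal Python sketch; the intent names and priority values are hypothetical, and how Lia will actually rank intents is not specified in the text.

```python
from typing import Dict, List, Optional

# Hypothetical priorities: a lower number is answered first.
INTENT_PRIORITY: Dict[str, int] = {
    "accident_or_breakdown": 0,
    "booking_change": 1,
    "office_address": 2,
}
DEFAULT_PRIORITY = 99  # intents without an explicit priority go last


def order_intents(detected: List[str]) -> List[str]:
    """Sort intents detected in one message so the most important comes first.

    sorted() is stable, so intents with equal priority keep their original order.
    """
    return sorted(detected, key=lambda name: INTENT_PRIORITY.get(name, DEFAULT_PRIORITY))


def first_to_answer(detected: List[str]) -> Optional[str]:
    """Return the intent the bot should answer first, or None if nothing was detected."""
    ordered = order_intents(detected)
    return ordered[0] if ordered else None
```

A message asking both for the office address and about a breakdown would get the breakdown answer first, with the address question handled afterwards.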

With Lia, Localrent handles more customer requests without growing the support team. Issue resolution became 5× faster, agents no longer work under constant stress, and they are brought into conversations only for genuinely non-standard cases.

If you don't want to fall behind market leaders, switch to AI-powered chat support now. A typical Lia implementation for mid-market and small businesses takes about 3 days, and the investment pays off within the first 2–3 months.

For a free consultation with our team, leave a request in the form below. Our manager will follow up on a video call at your convenience and:

identify bottlenecks in your sales operation;

walk through which processes Lia can optimize;

break down how Lia helps you grow margin;

explain pricing tiers and implementation options for your business.